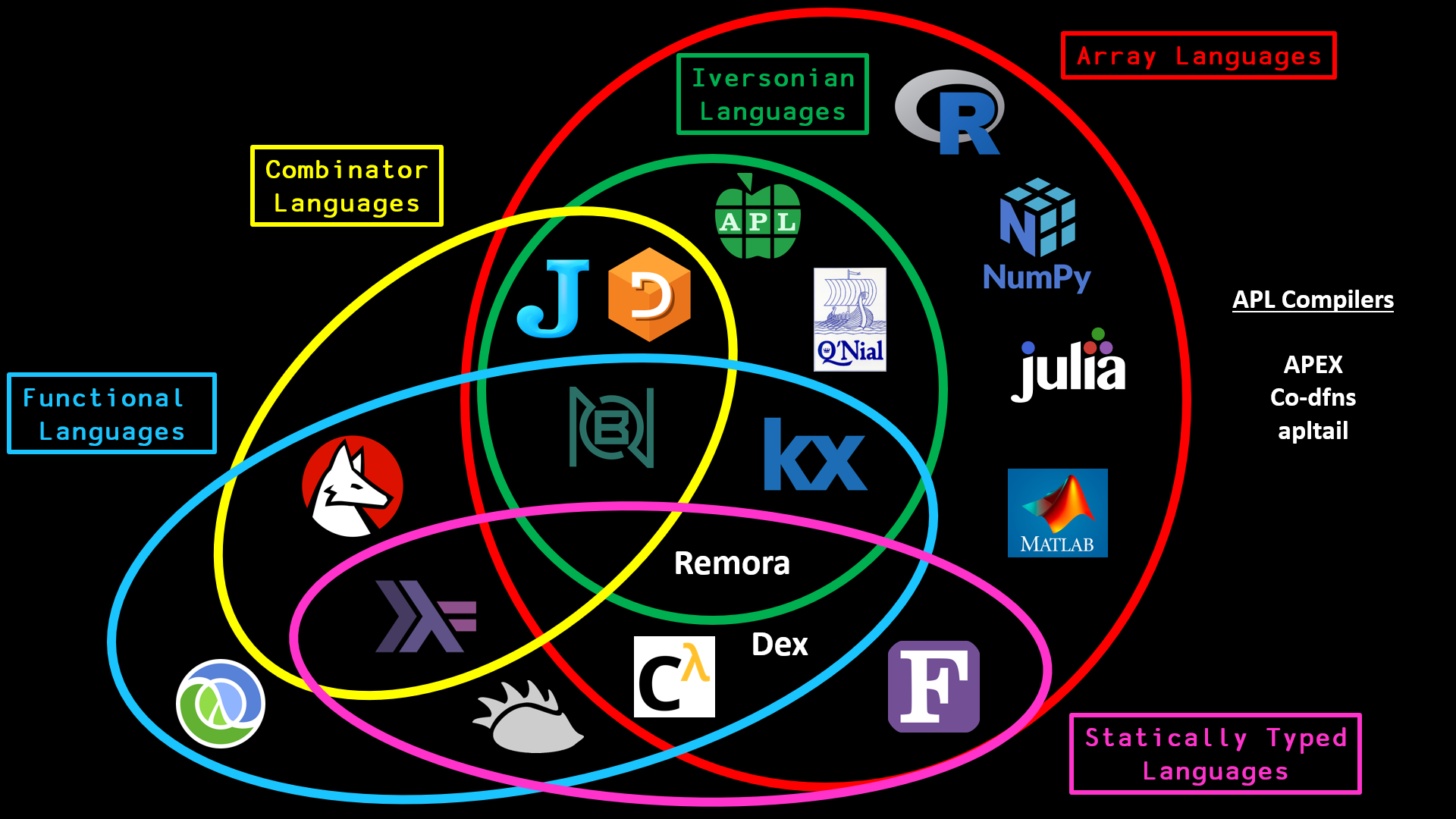

On the most recent episode of ArrayCast, we interviewed Troels Henriksen about the Futhark Programming Language. From the website, “Futhark is a statically typed, data-parallel, and purely functional array language in the ML family, and comes with a heavily optimising ahead-of-time compiler that presently generates either GPU code via CUDA and OpenCL, or multi-threaded CPU code.”

While talking to Troels about accelerating array languages, I asked what other languages and projects operate in the same space. This short blog post highlights his response.

- 🇩🇰 Futhark, a Haskell-inspired language out of DIKU at the University of Copenhagen

- 🏴 Single Assignment C (SaC), a C-inspired language out of Heriot-Watt University

- 🇺🇸 Dex, a research array language out of Google

- 🇺🇸 Co-dfns, an APL compiler out of Indiana University / Aaron Hsu

- 🇦🇺 Accelerate, a Haskell library out of the University of New South Wales and NVIDIA

- 🇺🇸 Copperhead, a Python-inspired research language/compiler out of NVIDIA

On top of this, several other initiatives were mentioned, including:

- 🇩🇰 Typed Array Intermediate Language (TAIL), out of the University of Copenhagen

- 🇩🇰 apltail, an APL compiler targeting TAIL, out of the University of Copenhagen

- 🇺🇸 Remora, a typed array language out of Northeastern University

- 🇨🇦 APEX, a Dyalog APL compiler targeting SaC, out of Snake Island Research

The following people have been or are actively involved in working on the above projects:

| | Project | Individual* | GitHub | ArrayCast? |
|---|---|---|---|---|
| ✅ | Futhark | Troels Henriksen | GitHub | Episode 37 |
| ✅ | Co-dfns | Aaron Hsu | GitHub | Episode 19 |
| ✅ | APEX | Bob Bernecky | GitHub | Episode 55 |
| ✅ | SaC | Sven-Bodo Scholz | GitHub | Episode 107 |
| | SaC | Artem Shinkarov | GitHub | - |
| | Remora | Justin Slepak | GitHub | - |
| | TAIL/apltail | Martin Elsman | GitHub | - |
| | Copperhead | Bryan Catanzaro | GitHub | - |
| | Accelerate | Manuel Chakravarty | GitHub | - |
| | Accelerate | Trevor McDonell | GitHub | - |
| ✅ | Dex/PyTorch | Adam Paszke | GitHub | Episode 58 |
| | Dex | Dougal Maclaurin | GitHub | - |

\* Names link to personal websites.

I plan to have as many of the above folks on ArrayCast as possible in the future, and I will update this blog post with links whenever one of them appears.

Some honorable mentions that are relevant to the space (including some that didn't come up in the episode):

- JAX, Autograd and XLA, brought together for high-performance ML research

- XLA, a domain-specific compiler for linear algebra that targets CPUs, GPUs and TPUs

- PyTorch, tensors and dynamic neural networks in Python with strong GPU acceleration

- TensorFlow, an open source platform for machine learning

- Nx, multidimensional arrays (tensors) and numerical definitions for Elixir

- MLIR, a hybrid IR for building compiler infrastructure

- NESL, a parallel, functional, array programming language designed at CMU

- Nessie, a NESL compiler that generates CUDA code for NVIDIA GPUs

Other links of interest that I came across while compiling the links for this blog post:

- A comparison of Futhark and Dex

- Array language compilation in context, specifically the section on Typed Array Languages

- Futhark on ArrayCast

The last link is a post written a few days after the ArrayCast episode aired. Finally, you can find comparisons of different array languages in my Array Language Comparison GitHub repository, which is the source of the graphic at the top of this post.

Feel free to leave a comment on the Reddit thread.